New AI developments are coming thick and fast. Which AI trends should we watch out for and how will they revolutionise our relationship with AI technology?

The future of AI is being shaped by game-changing innovations, from breakthroughs in data retrieval to a shift towards smaller yet more powerful AI models. Daniel Sohn, Legal Counsel at Aleph Alpha, a startup often dubbed the German rival to OpenAI, shares his lowdown on the AI trends poised to make a big impact.

AI trend one: Innovation boosts data retrieval

Most businesses sit on vast data lakes, comprising valuable nuggets of commercial, financial and legal information. Retrieving and using that data purposefully within AI systems is an almighty challenge. But two exciting AI developments are about to make it much easier.

Fine-tuning: Essentially, further education for pre-trained large language models (LLMs), fine-tuning uses smaller and more targeted datasets to either change the LLM’s behaviour or teach it new skills in order to perform specific tasks.

Retrieval-augmented generation (RAG): RAG leverages existing data without removing it from the data pool. It retrieves the records most relevant to a user's prompt and passes them to the LLM as context, which enhances the quality of the LLM's outputs. RAG is, says Daniel, the tech alternative to sending your intern to the library to access contextual information. “For the first time, we’re getting access to the library that sits at the backend of our IT systems,” he explains. “RAG is the gamechanger that means you can leverage both structured and unstructured data from existing silos yet leave it exactly where it is.”
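To make the retrieval step concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not how any particular vendor implements RAG: the document names and contents are hypothetical, simple word-count similarity stands in for the learned embeddings a production system would typically use, and the final call to an LLM is left out.

```python
import math
import re
from collections import Counter

# Hypothetical document store. In practice the data would stay in its
# existing silos and only an index over it would be queried.
DOCUMENTS = {
    "contract_2023.txt": "The supplier contract renews annually and includes a 30 day termination notice.",
    "finance_q1.txt": "First quarter revenue grew while operating costs stayed flat.",
    "policy_travel.txt": "Employees must book travel through the approved travel portal.",
}


def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine_similarity(a, b):
    """Cosine similarity between two word-count vectors (Counters)."""
    dot = sum(a[term] * b[term] for term in set(a) & set(b))
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query, documents, top_k=1):
    """Return the names of the top_k documents most similar to the query."""
    query_vec = Counter(tokenize(query))
    scored = [
        (cosine_similarity(query_vec, Counter(tokenize(text))), name)
        for name, text in documents.items()
    ]
    scored.sort(reverse=True)
    # Drop documents that share no words with the query at all.
    return [name for score, name in scored[:top_k] if score > 0]


def build_prompt(query, documents, top_k=1):
    """Combine the retrieved passages and the user question into one prompt."""
    sources = retrieve(query, documents, top_k)
    context = "\n".join(f"[{name}] {documents[name]}" for name in sources)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )


question = "What is the termination notice in the supplier contract?"
print(build_prompt(question, DOCUMENTS))
```

The prompt that comes out would then be sent to an LLM. The source documents are only read, never moved or altered, which is the "leave it exactly where it is" property Daniel describes.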

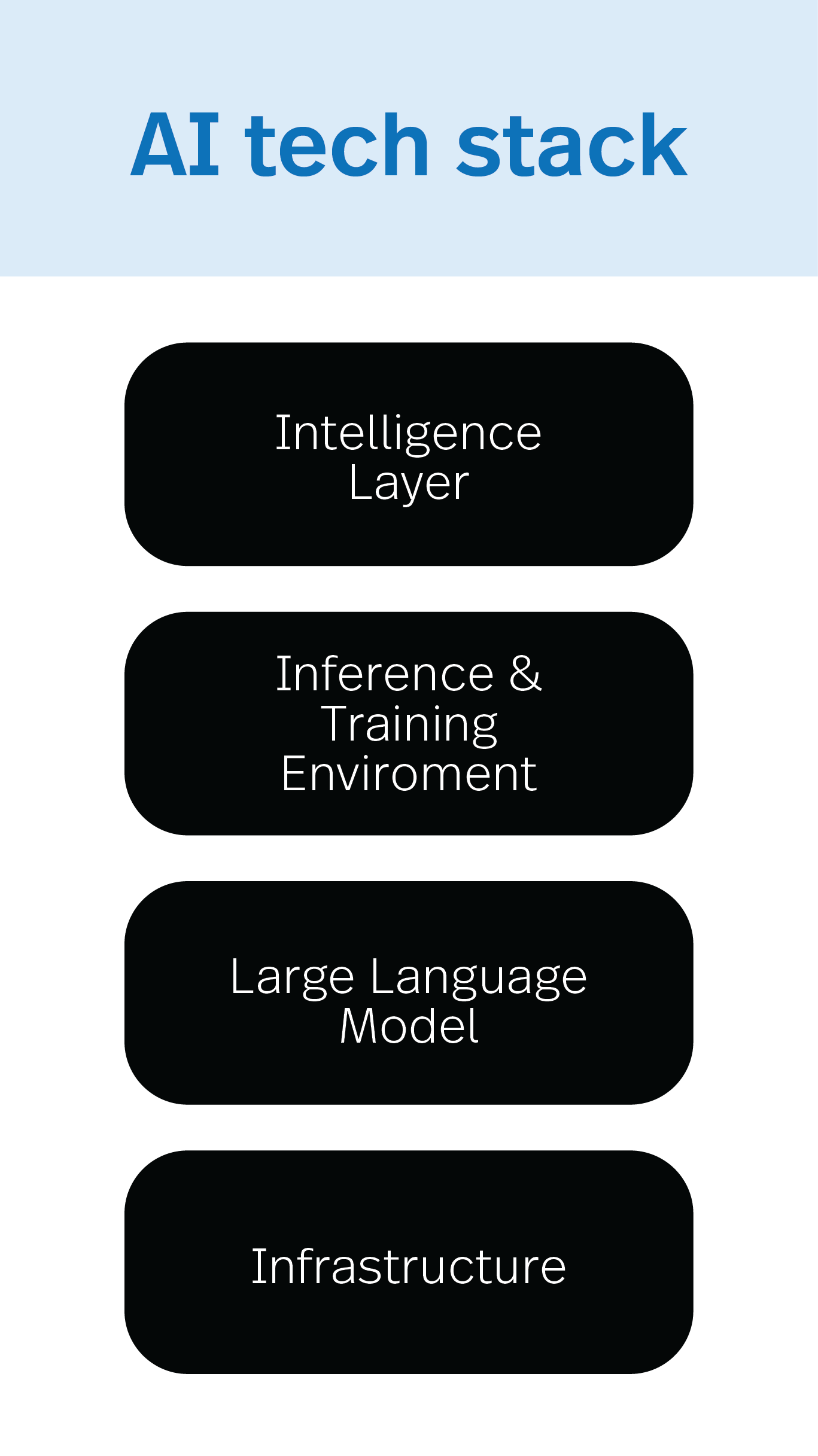

AI trend two: Growth in tech stacks

Expect AI providers to grow their tech stacks with customisable add-ons to leverage data.

At the base of the modern AI stack is infrastructure, consisting of hardware and basic computing resources. Infrastructure may be sited on company premises or delivered as a service, via a cloud-based application programming interface (API).

The LLM layer may include graphics processing units (GPUs) that speed up computational processes, such as deep learning. Despite ongoing computer-chip shortages, US tech firm Nvidia is pushing ahead, building powerful GPUs for statistical calculations to run LLMs.

An inference and training environment makes up the third layer, allowing the LLM to connect and interact with other technologies, like RAG for data retrieval.

The intelligence layer sits on top of the stack. It comprises software development kits (SDKs) for building applications and for leveraging the capabilities of the underlying LLM and infrastructure. Mistral AI, a French start-up, offers a fine-tuning toolkit with which it is seeking to revolutionise how AI services are deployed and integrated. The toolkit simplifies complex processes and customises AI solutions, via an API, to organisations’ unique needs.

This structured and stackable approach means organisations can efficiently build and scale their AI systems at a pace and scope that works for them.

AI trend three: AI use cases drive model selection

A shift is underway from horizontal platform products, aimed at users from across multiple sectors, to greater “verticalization of LLMs”.

Daniel explains: “Vertical LLMs are trained to understand the nuanced language of a specific sector, like legal, and can be further fine-tuned to address enterprise-specific AI-related tasks and needs.”

Increasingly, organisations are turning to use cases to identify how AI developments can improve efficiencies or drive innovation within their own operations. Specialist or industry-specific use cases can help to cement procurement decisions, providing assurances that investment in a particular model may deliver operational returns.

There are commercial opportunities for vendors. Cloud computing platform Microsoft Azure, for instance, offers a catalogue of more than one thousand LLMs to choose from, according to Daniel. He expects service advisers to emerge in the space to help with model selection.

AI trend four: Future of AI lies in smaller but more powerful models

Large language models, like GPT-4, consume lots of energy. A shift towards smaller, less energy-hungry models will cut consumption and costs, in line with environmental, social and governance (ESG) objectives. It will also reduce architecture footprints, without compromising efficiency and speed.

As the computational effort required for training (measured in FLOPs, the total number of floating-point operations) is related to the size of the LLM, smaller models are more likely to stay below the compute thresholds that trigger additional compliance obligations under the EU AI Act.
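A rough back-of-the-envelope calculation shows how model size feeds into that regulatory picture. The sketch below rests on two assumptions that are not from the interview: a widely used rule of thumb that training takes about six floating-point operations per model parameter per training token, and the EU AI Act's figure of 10^25 floating-point operations of cumulative training compute, above which a general-purpose model is presumed to pose systemic risk. The model sizes are hypothetical, and the function names are for illustration only.

```python
# Cumulative training compute (total floating-point operations) above which
# the EU AI Act presumes a general-purpose AI model to pose systemic risk.
EU_AI_ACT_THRESHOLD_FLOPS = 1e25


def estimate_training_flops(num_parameters, num_training_tokens):
    """Rule-of-thumb estimate: about 6 operations per parameter per token."""
    return 6 * num_parameters * num_training_tokens


def exceeds_threshold(num_parameters, num_training_tokens):
    """True if the estimated training compute is above the threshold."""
    estimate = estimate_training_flops(num_parameters, num_training_tokens)
    return estimate > EU_AI_ACT_THRESHOLD_FLOPS


# Hypothetical models, for illustration only.
models = {
    "small model (7 billion parameters, 2 trillion tokens)": (7e9, 2e12),
    "large model (1 trillion parameters, 10 trillion tokens)": (1e12, 1e13),
}

for label, (params, tokens) in models.items():
    flops = estimate_training_flops(params, tokens)
    status = "above" if exceeds_threshold(params, tokens) else "below"
    print(f"{label}: about {flops:.1e} operations, {status} the threshold")
```

Under these assumptions the small model lands well below the threshold and the large one above it. An estimate like this is only a first screen, not a legal assessment.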

The AI space is fast-moving. These are just some of the innovations making an impact. Pioneering AI developments and emerging trends, coupled with dynamic regulatory changes, will continue to shape and reshape the future AI landscape.
