On 28 September 2022 the EU Commission published:

- Proposed amendments to the Product Liability Directive (PLD), which will include Artificial Intelligence (AI)

- A new AI Liability Directive (AILD)

These proposals now sit alongside the EU's proposed AI Act (AIA).

According to the EU, these proposals will 'adapt liability rules to the digital age', particularly in relation to AI.

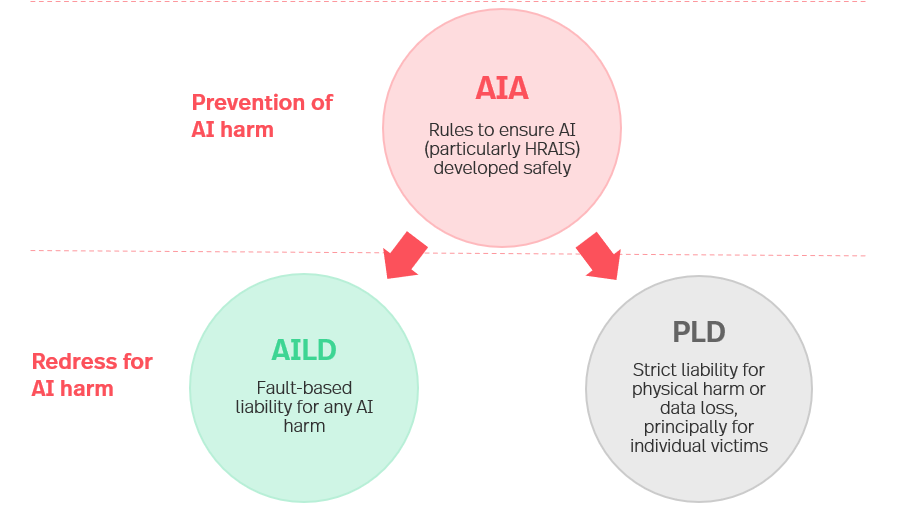

In this article, we summarise this new tripartite regime and explain how these three laws fit together.

EU AI Liability Regime: background to the proposals

- In the February 2020 White Paper on AI, the European Commission President, Ursula von der Leyen, laid out a coordinated European approach on AI, both to promote innovation and its uptake and to address the risks associated with its use.

- On 21 April 2021, the EU Commission proposed the AIA. The AIA focuses on safety and the prevention of AI harm, whereas the PLD and AILD focus on redress following harm caused by AI. The three laws are intended to operate as a tripartite model in which safety and liability are two sides of the same coin.

- The Commission’s reasons for the new developments

- We may even see a 'European convention on artificial intelligence, human rights, democracy and the rule of law' in due course, which would supplement the AIA but potentially be open to non-EU member states to join.

AI Act

Summary: the AIA proposes a risk-based approach comprising prohibited AI uses, 'high-risk' AI systems (HRAIS) and other AI uses.

The EU is focused on protecting citizens from AI harm: the AIA focuses on harm prevention (i.e. ensuring AI is developed safely), rather than cure (which is the focus of PLD and AILD).

The majority of the AIA deals with substantive and procedural requirements for HRAIS. It is consistent with the EU product safety framework (which is the lens through which the EU views AI), with a greater regulatory burden on developers than on users. The AIA also lays down an enforcement framework.

Timing: the AIA is expected to come into force in late 2023 or 2024, with a two-year grace period to allow organisations to comply.

Product Liability Directive

Summary: the existing PLD comprises a strict liability regime giving redress to consumers who suffer certain types of harm from a defective product. The PLD has extraterritorial effect, as non-EU manufacturers can be held indirectly liable for defective products through authorised representatives and importers.

Major changes proposed by EU:

- The definition of 'product' is expanded to include intangible items e.g. software, including AI systems.

- A claimant with a plausible claim can seek an order for a defendant to disclose relevant evidence. Failure to comply results in a presumption of the defect.

- ‘Defect’ can take into account the 'effect on the product of any ability to continue to learn after deployment', future-proofing the regime against increasingly sophisticated AI models which learn and develop post-deployment.

- The definition of ‘damage’ is expanded to include the 'loss and corruption of data', provided the data is not used exclusively for professional purposes.

- If a claimant faces excessive difficulties in proving the defect and/or causation due to technical or scientific complexity, then the defect / causation can be presumed on the basis of sufficiently relevant evidence.

- The current 10-year longstop for claims is extended to run from the time of any substantial modification, likely including software updates.

Timing: Member States to bring the amended PLD into law one year after the modifying directive is passed.

For more detail, please see our separate note on the proposed new PLD.

AI Liability Directive

Summary: the AILD provides common rules for a non-contractual, fault-based liability regime for damage caused by AI, particularly HRAIS. The AILD has extraterritorial effect, as it applies to the providers and/or users of AI systems that are available on or operating within the Union market.

Origin: the EU sees difficulty and uncertainty in claims for AI harm under the current fault-based liability regimes due to the complexity, autonomy and opacity of AI. The AILD is intended to 'adapt private law to the needs of the transition to the digital economy' and make it easier to bring claims for harm caused by AI.

Key provisions

Article 1 (Scope): clarifies that the regime is intended to compensate for damage caused intentionally or negligently

Article 2 (Definitions): ensures consistent definitions with the AIA; clarifies that a claim can be brought by a subrogated party (e.g. an insurer) or a representative (e.g. on behalf of a class), with the EU expressly contemplating class actions in the AILD Explanatory Memorandum; and clarifies that a claim can be brought by an individual or legal entity against another individual or legal entity

Article 3 (Disclosure of evidence and rebuttable presumption of breach of duty for HRAIS):

- to help claimants identify potentially liable defendants and relevant evidence for damage caused by HRAIS, the court may order a provider or user to disclose necessary and proportionate evidence about HRAIS suspected of having caused damage (subject to safeguards around confidential information)

- where a defendant fails to comply with an order to disclose or preserve evidence in a claim for damages, there is a rebuttable presumption that the defendant breached the relevant duty of care

Article 4 (Rebuttable presumption of causation for HRAIS and non-HRAIS):

- rebuttable presumption of a causal link between the defendant's fault and the act or omission of the AI system giving rise to the damage where: (a) there is a breach of duty (proven or presumed), (b) it is reasonably likely that the fault has influenced the relevant act or omission of the AI system, and (c) the claimant proves that such act or omission has given rise to damage

- rebuttable presumption does not apply to HRAIS where 'sufficient evidence and expertise is reasonably accessible for the claimant to prove a causal link'

- rebuttable presumption only applies to non-HRAIS where it is excessively difficult for the claimant to prove the causal link

- where it concerns a damages claim against a HRAIS provider, a breach of duty is only established if, taking into account the HRAIS risk management system, the provider breached its AIA obligations relating to data, transparency, human oversight, accuracy, robustness and cybersecurity, or the provider failed to take corrective actions to remedy another breach or to withdraw / recall a HRAIS

- where it concerns a damages claim against a HRAIS user, a breach of duty is established if the user breached its obligation to use / monitor the AI system in accordance with the accompanying instructions of use or, where appropriate, to suspend or interrupt its use
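For readers who find it easier to follow the Article 4 conditions as a decision rule, the sketch below restates the first three bullets above in Python. It is an illustrative simplification only, not a statement of how a court would apply the AILD: the `Claim` class, its field names and the `causation_presumed` function are our own hypothetical labels, each input is treated as a simple yes/no finding, and the result only indicates whether the presumption arises (the defendant can still rebut it).

```python
from dataclasses import dataclass


@dataclass
class Claim:
    """Hypothetical yes/no findings for a single damages claim (illustrative only)."""
    high_risk: bool                       # is the AI system a HRAIS?
    breach_of_duty: bool                  # (a) fault proven, or presumed e.g. under Article 3
    fault_likely_influenced_output: bool  # (b) reasonably likely the fault influenced the act/omission
    output_caused_damage: bool            # (c) claimant proves the act/omission gave rise to damage
    evidence_reasonably_accessible: bool = False  # HRAIS carve-out
    proof_excessively_difficult: bool = False     # non-HRAIS gateway


def causation_presumed(claim: Claim) -> bool:
    """Return True if the rebuttable presumption of causation arises.

    A True result is only a starting point: the presumption is rebuttable.
    """
    conditions_met = (
        claim.breach_of_duty
        and claim.fault_likely_influenced_output
        and claim.output_caused_damage
    )
    if not conditions_met:
        return False
    if claim.high_risk:
        # For HRAIS the presumption falls away where the claimant can
        # reasonably access sufficient evidence and expertise.
        return not claim.evidence_reasonably_accessible
    # For non-HRAIS the presumption only applies where proving the
    # causal link is excessively difficult for the claimant.
    return claim.proof_excessively_difficult


hrais_claim = Claim(True, True, True, True)
non_hrais_claim = Claim(False, True, True, True)
print(causation_presumed(hrais_claim))      # HRAIS, no accessible evidence
print(causation_presumed(non_hrais_claim))  # non-HRAIS, proof not shown to be excessively difficult
```

The point the sketch brings out is that the default differs by risk category: for a HRAIS the presumption applies unless the carve-out is shown, whereas for a non-HRAIS it applies only once excessive difficulty is established.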

Timing: Member States to bring the AILD into law two years after the AILD is passed.

The tripartite model

The AIA focuses on safety and the prevention of harm associated with AI systems. In contrast, the PLD and AILD provide different but overlapping routes for redress following harm caused by AI systems. All three laws are designed to fit together into a single tripartite model, as shown above, with the AIA on one side of the coin and the AILD and PLD on the other.

What should organisations do now?

The PLD and AILD will now go through the EU legislative process (which the AIA is already going through). If they are adopted, each Member State will need to implement the PLD and AILD into its national law.

Although these are only draft laws, it is important for organisations to start thinking about compliance now. These regimes (the AIA in particular) require steps to be taken at the AI development stage, so any AI systems being developed or procured now should be designed and selected with these regimes in mind in order to ensure future compliance.

The AILD makes compliance with the AIA even more crucial. We are starting to see organisations undertake risk assessments against the AIA (i.e. establishing whether their AI use cases are subject to the AIA and, if so, whether they are HRAIS) and implement appropriate governance (e.g. AI inventories and processes to ensure compliance). We can assist with this.

Aside from compliance, various other issues should be considered e.g. contractual protection (especially for any AI procurement) and insurance.

Our AI Group is experienced in advising businesses on legal, regulatory and ethical issues arising out of the use of AI. Please contact us if you would like to discuss these developments with us.
